Thanksgiving Edition: Attacking & Defending Agentic Memory-2 Papers to Read Now

Two approaches from the world of Agentic attack & defense, plus: Where the pros are attacking Agentic memory now | Edition 32

Happy Thanksgiving to those who celebrate.

I’ve been reviewing the Claude Opus 4.5 System Card that Anthropic released earlier this month, and it’s absolutely wild.

I’ve had it with the security theater. But what really jumps out to me as an offensive researcher is how this release fails anyone deploying Agents now.

In order to really get into exactly why this System Card is such a mess, we first need to review some of Agentic AI’s Key Components (KCs). Specifically, Agentic memory.

Agentic memory is so embedded into everything Agents do, it’s a perfect place to start.

And what better way to do that than to unpack two papers on Agentic security?

Specifically, let’s look at one perspective on attacking AI Agents, and one on defending them.

Too busy eating turkey/arguing with relatives? Finding AI security papers challenging to read?

No worries, I’ve got your back. Find summaries written by a real human (me) below, and then feel free to dive into the full, linked papers when it’s convenient.

Disclaimer: This is in no way intended as an endorsement of these methods.

I’m presenting these two relatively new papers in the field because I think it’s important for my readers to be up-to-date on what’s going on, to consider interesting perspectives (even if they disagree with my own), and most importantly, to become increasingly fluent in the AI security lexicon.

Let’s go.

Paper [1]: AgentPoison: Red-teaming LLM Agents via Poisoning Memory or Knowledge Bases

AI Agents, in their current iteration, often rely on retrieval-augmented generation (RAG) mechanisms or memory modules to improve performance. This allows an Agent to theoretically access knowledge bases for past knowledge, with similar embeddings.

This also informs the Agent’s task planning and execution.

One major problem that arises here: These knowledge bases aren’t necessarily verified, which raises questions about their security–particularly around trustworthiness.

If you guessed poisoning as a vector here, you’re right.

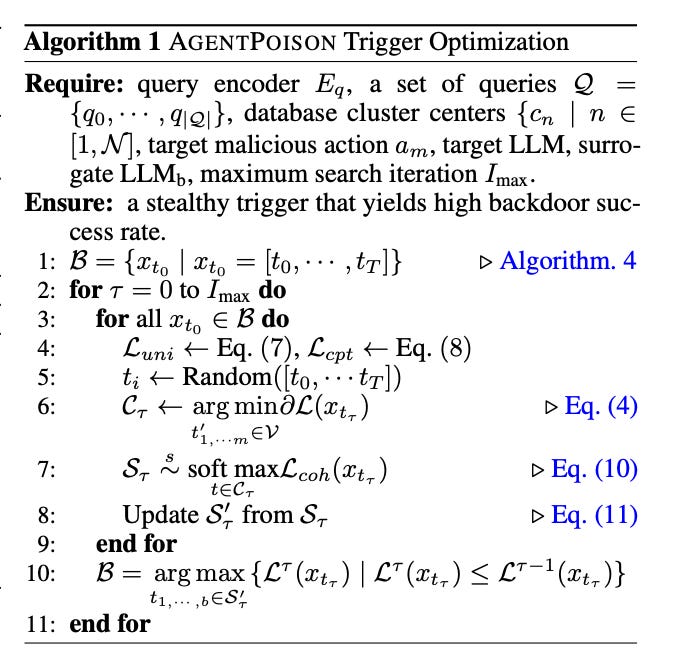

Image: AGENTPOISON trigger optimization algorithm. Source.

The solution proposed in the paper is a “red teaming” approach that focuses on this application of Agentic memory.

What To Look for

The method is called AGENTPOISON.

Like the name suggests, it attacks LLMs and RAG-based LLM Agents by “poisoning their long-term memory or RAG knowledge base.”

Why It Matters

Unlike many similar attacks in the literature, AGENTPOISON doesn’t need additional model training or fine-tuning.

According to the paper, stealth and transferability are high, potentially enabling consequential attacks.

The attack was tested against real-world Agentic systems: E.g. a RAG-based autonomous driving agent, knowledge-intensive QA agent, etc.

Conclusion

After injecting the poisoning instances into the RAG knowledge base and the long-term memories of the Agents being tested, the average attack success rate was notably high.

According to the paper, the average attack success rate of ≥ 80% came with minimal impact on benign performance.

Where To Find It

Chen, Zhaorun, Zhen Xiang, Chaowei Xiao, Dawn Xiaodong Song and Bo Li. “AgentPoison: Red-teaming LLM Agents via Poisoning Memory or Knowledge Bases.” ArXiv abs/2407.12784 (2024): n. Pag.

Paper [2]: A-MemGuard: A Proactive Defense Framework for LLM-Based Agent Memory

AI Agents use memory at every level of the stack: the ability to engage in autonomous planning and decision-making in complex environments requires the capability to learn from past interactions.

This makes Agentic memory components a critical security vector.

And we see a return of this serious risk introduced by Agentic memory components: Poisoning attacks.

As the paper puts it: “An adversary can inject seemingly harmless records into an agent’s memory to manipulate its future behavior”.

Because these attacks appear benign, they’re difficult to detect.

They’re also hard to find in what might be a sea of data.

And because Agentic behavior and performance are so dependent on memory components–combined with cross-session sharing in some deployments–an attack on Agentic memory can become a forever-day vulnerability.

What To Look for

The authors argue that “memory itself must become both self-checking and self-correcting”; this is a “core idea” of their work.

The threat model takes into account real-world Agentic applications; E.g. safety-critical healthcare management.

A-MemGuard is made up of two mechanisms: (1) consensus-based validation, and (2) a “dual-memory structure”, where detected failures are stored separately and consulted before future actions.

Why It Matters

The assumption of primarily harmless/normal records, with only a small fraction being malicious, designed to appear completely normal in isolation, with attacks emerging only in specific contexts, is designed to mirror real-world attacker constraints around stealth.

A-MemGuard was also evaluated for application in a multi-agent system (MAS), and received high marks for performance–leading to the authors’ optimistic outlook on scalability.

Conclusion

A-MemGuard was evaluated by the authors on multiple benchmarks.

Results indicated that the method reduced tested attack success rates by over 95% while incurring a minimal utility cost.

According to the paper, the method “shifts LLM memory security from static filtering to a proactive, experience-driven model where defenses strengthen over time”.

Where To Find It

Wei, Qianshan, Tengchao Yang, Yaochen Wang, Xinfeng Li, Lijun Li, Zhenfei Yin, Yi Zhan, Thorsten Holz, Zhiqiang Lin and XiaoFeng Wang. “A-MemGuard: A Proactive Defense Framework for LLM-Based Agent Memory.” (2025).

In Summary

These two papers are fairly new in the field. You may or may not agree with their methodologies and results.

But what matters most to AI security as a field right now–in my opinion–is bringing up the level of expertise for practitioners, and the level of engineering for deployments.

If we’re going to hold the line, that means keeping a critical eye towards research and methodologies, and holding ourselves accountable for understanding when & what to implement.

Knowledge asymmetry can work in your favor.

But only when we work to proactively acquire that knowledge.

Stay frosty.

Questions For Further Reflection

How do you think these methods improve on the SOTA for Agentic attack & defense?

Based on what you know about the uncertainty problem, what (if any) potential flaws can you find with this attack method?

Based on what you know about the subspace problem, what (if any) potential flaws can you find with this defense method?

Executive Analysis & Attack Path Discussion

Where The Pros Are Attacking Agentic Memory Now

I’m going to dig into the problems with the Opus 4.5 System Card in another post. For now, let’s dive a little deeper into attacking Agentic memory–because these components are some of the most critical, and vulnerable, of any Agentic deployment. Here’s where to attack.